Resources to inform about the projection environment

The Question

Video projectors have multiplied over the past ten years, whether for lectures, presentations of research work, presentations in companies.

The projection conditions are very variable, whether on the projection ratio (4/3 or 16/9), the size of the screen on which the image is projected, or the definition of the projector (HD, 4K).

The size of the projection room is also variable. They rarely communicate this information to the speaker, which should be the case so that he/she can adapt the content of his presentation to this environment.

I thus recently wondered what would be the best way both to communicate about this environment but also how to evaluate it.

The Choice

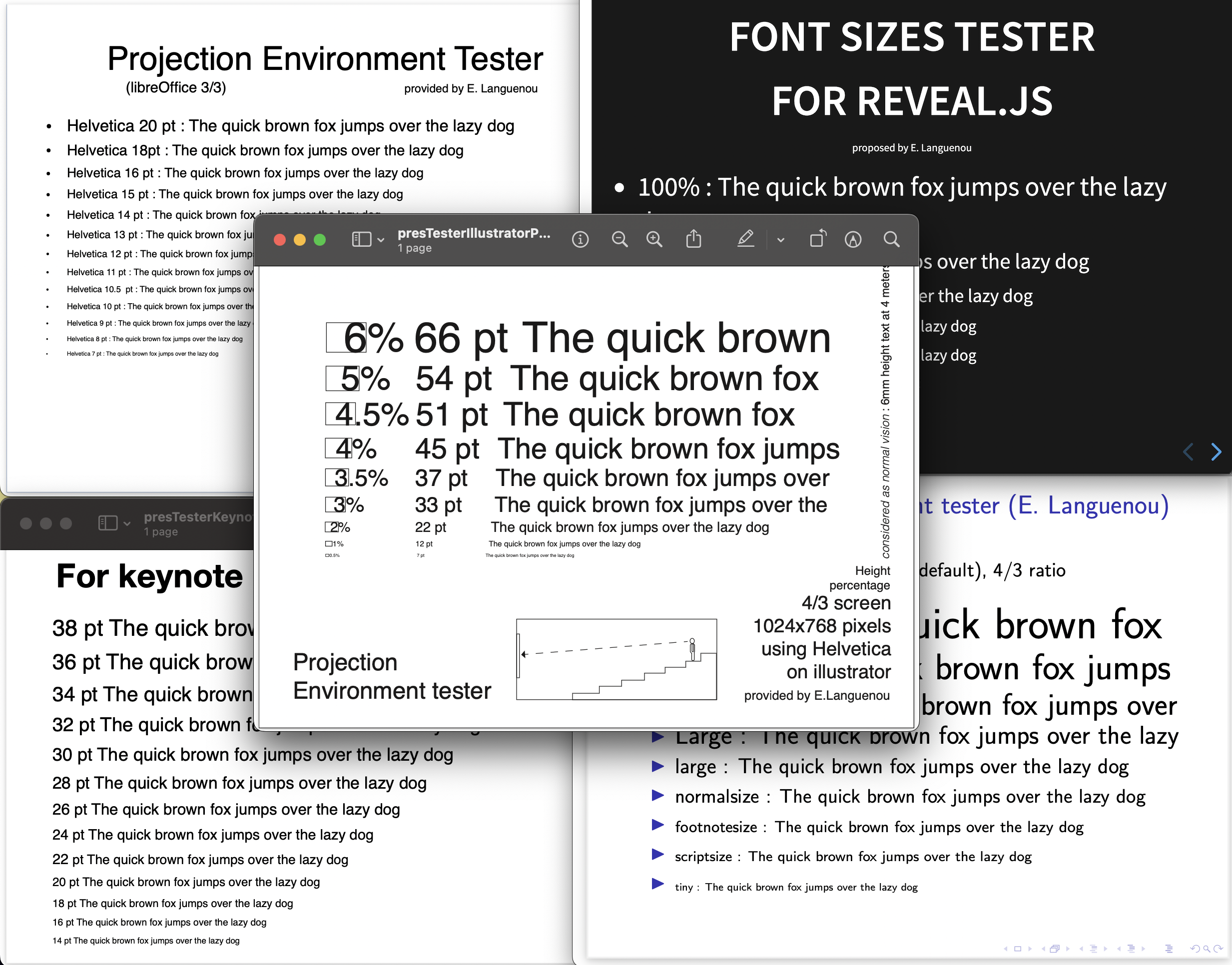

It is considered that a person with normal vision can decipher text 6mm high from 4m away, which gives us a limited visual angle for easy reading. Thus, the communication of the angle formed by the screen, for a spectator at the back of the room, would suffice to deduce the minimum size of the texts appearing on the screen.

But few of us have a tool that makes it easy to measure a visual angle from our eye. We could calculate it using the distance to the screen and the vertical size of the screen. Anyway, accessing this information could be a bit of a pain.

The Resources

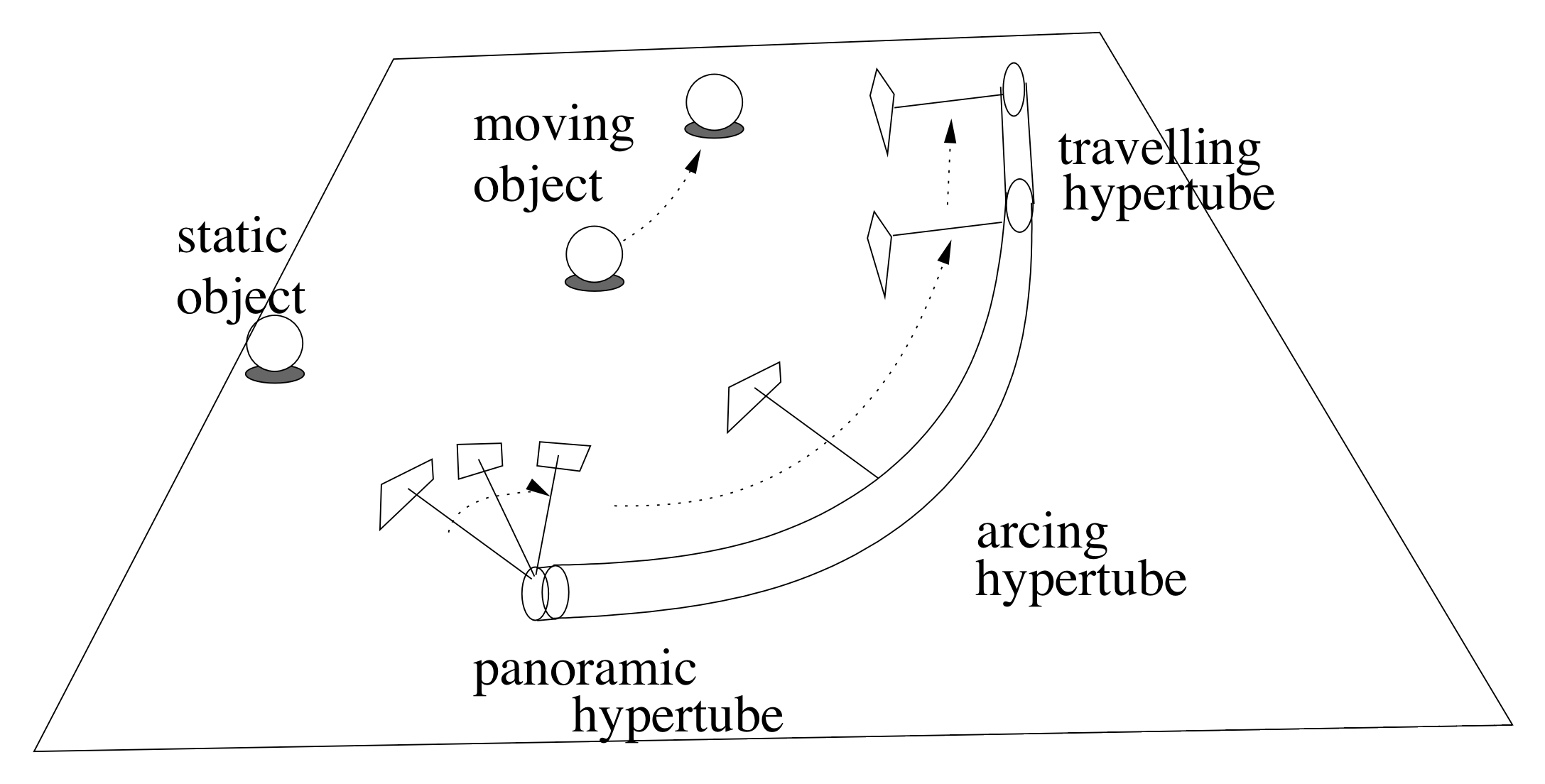

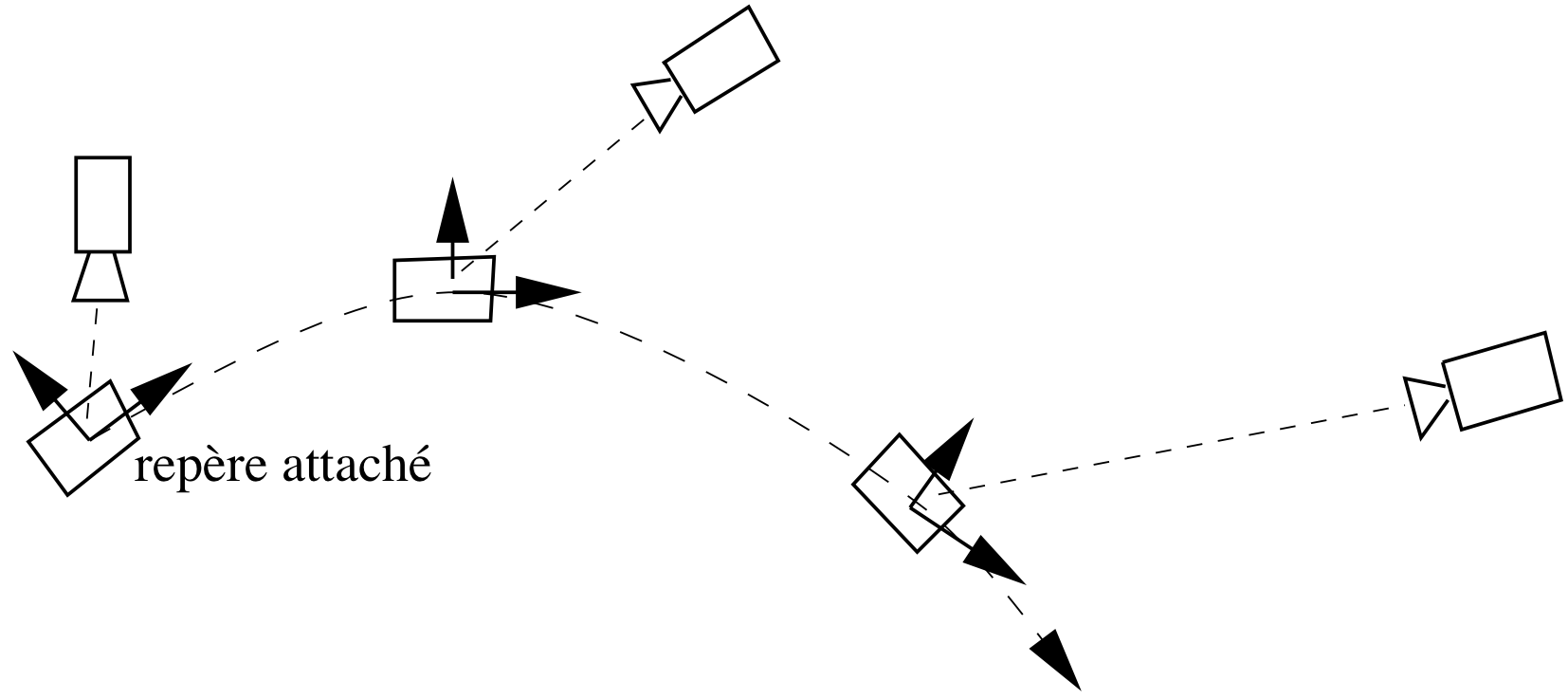

Hence, the convergence towards the creation of projection environment test pages, made for various presentation creation tools and for the two projection ratios (4/3 and 16/9):

-

beamer (presentation in latex, a text compiler)

-

libreoffice

-

keynote (apple)

-

reveal (revealjs, a web-browser based presentation tool)

-

a page created in illustrator which presents variable text sizes with the associated percentages in terms of page height.

To begin with, I used a single font, helvetica, although there are variations in appearance between fonts.

The Method

The person organizing the conference or the speaker if he/she has access to the room before the presentation can thus display the pages and visually find out from the back of the room the minimum usable text size.

It is then easy to use it or communicate it, depending on the rooms envisaged.

To download all the pages (6Mo), download

To get only some pages :

-

reveal js page 4/3 multi Taille , page 4/3 H tags , page 4/3 p tag , page 16/9 multi Taille , page 16/9 H tags , page 16/9 p tag

]]>